How your scanner uses OCR to read printed text, and where the magic happens

Optical Character Recognition, often abbreviated OCR, is one of the most important pieces of technology that’s used in modern scanning and image capture: It’s what allows machines to “read” what’s printed or written on a piece of paper. Yet, despite its presence in hundreds of our daily activities, it’s something we rarely even notice.

As a scanner manufacturer, we actually think that’s a good thing, since quietly doing your job in the background without any mistakes is exactly what you want from a machine. But any time you’ve scanned a receipt on your 3-in-1 home printer, deposited a check at an ATM, used the camera function on Google Translate, or even put a dollar bill into a soda machine, chances are some type of OCR technology was at work.

How Does OCR Work? The Basics:

So, how does a machine turn a bunch of camera data into readable text? In the simplest terms, it’s a clever piece of reverse engineering. A scanned or photographed image is run through an OCR engine that identifies groups of pixels based on their contrast with the background, and then compares them against a library of known fonts to see if any of them match. If a set of pixels matches closely enough, it’s assigned a confidence score, and if it passes with a high enough certainty, it’s recorded as a text character.

Pretty simple, right? Well, it would be, if only things were always that straightforward. In real-world settings, though, it’s not usually practical to run a comprehensive OCR test on every part of every document.

What that means is: If you’re scanning a check at a bank, you’re not going to be looking for the same things that you would be if you were scanning a driver’s license or a receipt. So, depending on what you’re trying to read and what you’re going to do with the OCR data, you’re likely to end up with lots of OCR “mini engines” built for very specific purposes.

One Size Does Not Fit All

Our own check scanners are actually a great example of how OCR becomes specialized to do a particular job. When you deposit a check at the bank, it goes through two completely different OCR engines in the course of being converted to an image.

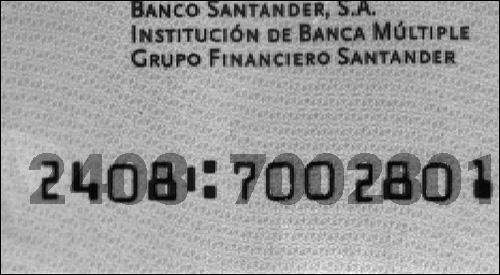

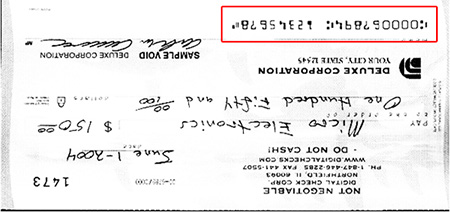

Once you put a check through the scanner, the first thing that happens is that the image is put through a very basic OCR analysis, either onboard the scanner itself or on the PC it’s directly attached to. These are limited, functional tests that are part of Digital Check’s own internal OCR engine. Some of the things it might do are: Taking an OCR reading of the magnetic MICR line so those characters can be compared against the magnetic reading; recognizing upside-down checks if the printing on the bottom is out of place; or identifying certain other documents (mostly used internationally) that have non-magnetic control lines.

Since the kinds of OCR tests done by our own engine are only the ones strictly necessary for the operation of the scanner, it’s naturally going to be very stripped-down compared to a “full” engine that handles more advanced functions. For example, it only uses a handful of fonts in its library: the E13B and CMC7 MICR fonts; OCR-A and OCR-B; a few miscellaneous fonts; and some limited barcode support.

Another important characteristic of the OCR engine in a check scanner is that it’s programmed only to look for certain characters on certain parts of the check, not to analyze the whole document. That means it’ll read a couple of half-inch height bands across the face of the document, while ignoring the rest of the check where things like the dollar amount and signature would be printed. That cuts down the area it analyzes by a good 80 percent or more, and since we’re only looking for a few fonts, it eliminates 99 percent of the work.

That kind of streamlined ability is necessary in order to keep the engine to a manageable size to fit into the scanner API, and also so that it can quickly be run on hundreds of documents in real time. The scanner API performs basic functional OCR on a check in 20 to 30 milliseconds – fast enough to keep up with a continuous-feed machine – whereas doing a full analysis of the entire document would be slower than the scan speed. You might find other similar “front-end” OCR engines that handle the essentials for their particular types of devices.

When it comes to the more elaborate tests – recognizing printing in any possible font, or the Holy Grail of OCR, deciphering handwriting such as the kind that’s often found in the courtesy and legal dollar amounts of a check (known as a “CAR/LAR engine” on checks) – then things get a lot more complicated. That requires a serious OCR engine written by a software company that specializes in it. Orbograph and Mitek’s A2iA are two examples of companies that do this.

Those kinds of full OCR engines, at least in the banking world, are either built into the bank’s main software package or run alongside it. They’ll use complex algorithms that can identify thousands of different fonts, as well as handwritten block and cursive letters, and account for all sorts of different situations as they read the key information printed on the check. It’s a whole different animal from what can run on a scanner, but hopefully you can see how both are good at the jobs they’re designed for!

We hope you’ve enjoyed this look into the interesting but largely unknown world of scanners and OCR, and that we’ve helped you understand a little bit more about the silent processes in the background that have helped machines learn how to read.